What Is Data Observability? Use Cases, Pillars & Best Practices

By Carlos Correa

Your data pipeline broke at 2 am on a Tuesday. By the time your BI team spotted the wrong numbers in the executive dashboard, three strategic decisions had already been made on bad data. Data observability tools exist so that scenario never plays out.

Key Takeaways:

- Data observability is proactive, continuous monitoring of your entire data stack — not a one-time quality check

- The five pillars of data observability are freshness, distribution, volume, schema and lineage

- Data quality and data observability are related but not the same thing, and confusing them leads to gaps in your monitoring strategy

- Observability for data engineering teams is mission-critical when pipelines touch revenue, compliance or customer-facing products

- Ringy gives sales and operations teams a real-time view of their data without having to build a dedicated observability stack

What Is Data Observability?

Data observability is the ability of an organization to fully understand the health, state and reliability of data flowing through its systems at any given moment. It combines automated monitoring, anomaly detection, root cause analysis and data lineage into a continuous feedback loop that flags problems before they reach downstream consumers.

The core problem it solves is data downtime: the periods when your data is missing, erroneous, incomplete or otherwise untrustworthy.

Data downtime is not a minor inconvenience.

According to research published by Actian citing Gartner findings, poor data quality costs organizations an average of $15 million per year. And that figure does not account for the reputational damage or the strategic decisions made on corrupted data before anyone noticed the problem.

Data observability was originally built around structured data in traditional warehouses.

As of 2025 and 2026, it has expanded significantly to cover:

- Unstructured data

- Streaming pipelines

- Cloud-native architectures

- AI model inputs and outputs

The market reflects this momentum.

According to Grand View Research, the global data observability market was valued at over $2.1 billion in 2023 and is projected to reach $4.7 billion by 2030 at a compound annual growth rate of 12.2%. A market does not grow at that pace unless the problem it solves is genuinely painful and widespread.

For sales and operations teams using platforms like Ringy, data observability software translates directly into confidence: confidence that the sales metrics you are reviewing in your dashboard are accurate, that your pipeline reports reflect reality and that decisions made on that data will actually hold up.

Data Quality vs. Data Observability: What's the Difference?

This is one of the most common points of confusion in the data space, and it matters because mixing them up leads to real gaps in how you protect your data.

Think of it this way. Data quality is like checking whether a patient is healthy today. You run a test, you get a result, you either pass or fail. Data observability is like having a continuous heart monitor. It is always watching, it catches anomalies in real time, and it tells you not just that something went wrong but where and why.

More precisely, data testing, data quality monitoring and data observability form a nested set of capabilities. Think of them as Russian dolls.

Data testing sits at the center:

- You write a test

- You run it on demand

- You check a specific condition

Data quality monitoring adds automation and scheduled checks across broader datasets. Data observability wraps around both of them with end-to-end pipeline visibility, lineage tracking, automated root cause analysis and continuous coverage at scale.

Observability does not replace data quality practices. It extends them. You still need good data quality rules and governance. But without observability layered on top, you will always be reactive — fixing problems after they have already caused damage rather than catching them before they propagate.

The table below breaks down the key differences so you can quickly identify which layer applies to a given problem.

| Dimension | Data quality | Data observability |
| --- | --- | --- |
| Scope | Specific datasets or fields | Entire data pipeline end-to-end |
| Approach | Rules-based checks and validation | Continuous automated monitoring and anomaly detection |
| Trigger | Reactive (run on demand or schedule) | Proactive (always-on, alerts on deviation) |
| Coverage at scale | Limited without significant manual effort | Designed to scale across thousands of tables and pipelines |
| Typical tools | dbt tests, Great Expectations, Soda | Monte Carlo, Datadog, Bigeye, Metaplane, Acceldata |
| Focus | Defining and enforcing expected data states | Understanding and monitoring actual data behavior and health |
| Measurement | Pass/fail on predefined rules | Metrics across freshness, volume, distribution, schema, and lineage |
| Goal | Ensuring data meets defined quality standards | Minimizing data downtime and providing full data context for debugging |
| Key Metric | Data quality score/compliance percentage | Mean Time To Detection (MTTD) and Mean Time To Resolution (MTTR) |

What this table does not show is the organizational cost of relying only on the left column. Research from Monte Carlo found that data teams spend 30 to 40% of their working time handling data quality issues rather than building. That is not a data quality problem.

That is an observability problem.
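To make the two observability metrics from the comparison table concrete, here is a minimal sketch of how MTTD and MTTR could be computed from an incident log. The incident records are invented for illustration, and the definitions are an assumption: MTTD is measured from when the incident started to when it was detected, and MTTR from detection to resolution (some teams measure MTTR from the start of the incident instead).

```python
from datetime import datetime, timedelta

def mean_delta(deltas):
    """Average a non-empty list of timedelta values."""
    return sum(deltas, timedelta(0)) / len(deltas)

# Hypothetical incident log; timestamps are made up for this example.
incidents = [
    {"started": datetime(2026, 1, 5, 2, 0),
     "detected": datetime(2026, 1, 5, 9, 0),
     "resolved": datetime(2026, 1, 5, 11, 0)},
    {"started": datetime(2026, 1, 12, 6, 0),
     "detected": datetime(2026, 1, 12, 7, 0),
     "resolved": datetime(2026, 1, 12, 10, 0)},
]

# Assumed definitions: detection lag and post-detection repair time.
mttd = mean_delta([i["detected"] - i["started"] for i in incidents])
mttr = mean_delta([i["resolved"] - i["detected"] for i in incidents])

print("MTTD:", mttd)
print("MTTR:", mttr)
```

Tracking these two numbers over time is one way to tell whether monitoring is actually shortening the window in which bad data goes unnoticed.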

The 5 Pillars of Data Observability

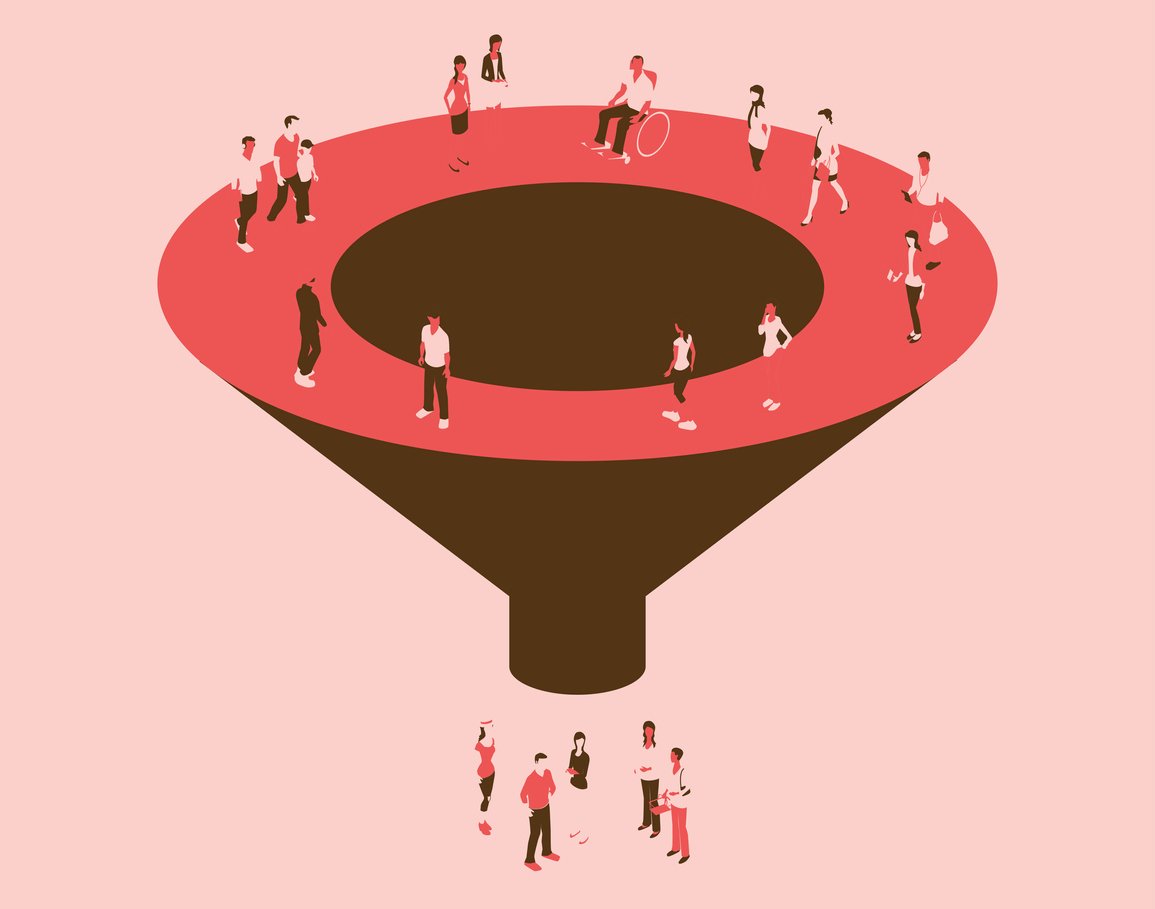

A robust data observability framework does not monitor everything equally. It focuses on five core signals that together give you a complete picture of your data's health across the entire stack.

These are the pillars that leading data observability platforms are built around.

Freshness

Freshness measures whether your data is up to date and whether updates are arriving at the expected cadence. A daily sales report that has not refreshed in 36 hours is a freshness failure. A pipeline that usually lands by 6 am but has not updated by noon is a freshness anomaly.

The challenge with freshness is that stale data often looks fine on the surface. The table is there. The rows are there. The dashboard loads without an error. But the data is from yesterday and nobody knows it.

For teams relying on sales analytics to inform daily decisions, a freshness gap is the difference between acting on current reality and acting on last week's picture.
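A freshness check can be as simple as comparing a table's last successful update against its expected cadence. The sketch below is illustrative only: `check_freshness`, the grace period and the timestamps are hypothetical, and in practice the last-updated time would come from your warehouse or orchestrator metadata.

```python
from datetime import datetime, timedelta, timezone

def check_freshness(last_updated, expected_interval, now=None, grace=timedelta(0)):
    """Return (is_fresh, lag) for a table given its last successful update."""
    now = now or datetime.now(timezone.utc)
    lag = now - last_updated
    # Fresh means the lag is within the expected cadence plus any grace period.
    return lag <= expected_interval + grace, lag

# Example: a daily sales report that last refreshed 36 hours ago.
now = datetime(2026, 1, 6, 12, 0, tzinfo=timezone.utc)
last_updated = now - timedelta(hours=36)

is_fresh, lag = check_freshness(
    last_updated,
    expected_interval=timedelta(hours=24),
    now=now,
    grace=timedelta(hours=2),
)
print(is_fresh, lag)
```

The point of the check is that it fires even though the table exists and the dashboard loads, which is exactly the case that goes unnoticed without monitoring.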

Distribution

Distribution monitors whether data values at the field level are within expected ranges.

You should ask:

- Are there unusual spikes in null values?

- Has a field that typically contains dollar amounts started receiving string values?

- Has the distribution of a categorical column shifted in a way that suggests an upstream process changed?

Distribution anomalies are especially dangerous in machine learning pipelines, where a subtle shift in input data distribution can silently degrade model performance without throwing a single error.
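The three questions above map onto three small field-level checks. This is a rough sketch using only the Python standard library; the function names and sample values are hypothetical, and the categorical check uses total variation distance as one possible measure of shift, not the method any particular platform uses.

```python
from collections import Counter

def null_rate(values):
    """Fraction of entries that are None."""
    if not values:
        return 0.0
    return sum(v is None for v in values) / len(values)

def non_numeric_rate(values):
    """Fraction of non-null entries that are not numbers (e.g. strings in an amount field)."""
    present = [v for v in values if v is not None]
    if not present:
        return 0.0
    bad = sum(not isinstance(v, (int, float)) or isinstance(v, bool) for v in present)
    return bad / len(present)

def category_shift(baseline, current):
    """Total variation distance between two non-empty categorical samples (0 = same, 1 = disjoint)."""
    p, q = Counter(baseline), Counter(current)
    keys = p.keys() | q.keys()
    return 0.5 * sum(abs(p[k] / len(baseline) - q[k] / len(current)) for k in keys)

# Hypothetical samples of an "amount" field and a "lead_source" field.
amounts = [19.99, 5.0, None, "N/A", 42, None, "12,50", 7.5]
baseline_sources = ["web"] * 6 + ["phone"] * 4
current_sources = ["web"] * 2 + ["phone"] * 3 + ["partner"] * 5

print(null_rate(amounts))
print(non_numeric_rate(amounts))
print(category_shift(baseline_sources, current_sources))
```

Each function returns a rate rather than a pass/fail result, so the threshold that counts as an anomaly can be set per field once a baseline exists.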

Volume

Volume monitoring tracks whether the amount of data arriving in a given period matches expected thresholds. A sudden drop in row count, such as a pipeline that normally delivers 50,000 records delivering only 3,000, is one of the clearest signals that something has gone wrong upstream.

Volume failures are often the first sign of a broken ingestion process, a failed API call or an upstream source that has changed its output format. Without volume monitoring as part of your data observability metrics, these failures can sit undetected for hours or days.
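As a minimal sketch of a volume monitor, the check below compares a run's row count with the expected count and flags deviations beyond a tolerance. The function name and the 50% tolerance are assumptions chosen for illustration; real thresholds should come from the pipeline's own history.

```python
def volume_anomaly(row_count, expected, tolerance=0.5):
    """Return (is_anomalous, deviation), where deviation is the relative change vs. expected."""
    if expected <= 0:
        raise ValueError("expected row count must be positive")
    deviation = (row_count - expected) / expected
    return abs(deviation) > tolerance, deviation

# The example from the text: 3,000 rows arrive where 50,000 are normal.
is_anomalous, deviation = volume_anomaly(3_000, expected=50_000)
print(is_anomalous, f"{deviation:.0%}")
```

Using a relative deviation means the same check catches both sudden drops and unexpected spikes, such as duplicated loads.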

Schema

Schema observability tracks changes to the formal structure of your data: added or removed columns, data type changes, and renamed fields. Schema changes are among the leading causes of downstream pipeline breakages precisely because they are often made by upstream teams without notifying downstream consumers.

A column gets renamed in a source system. Nobody tells the data engineering team. The pipeline breaks silently.

Three dashboards go blank.

This is a completely preventable category of data downtime, and schema observability is the solution.
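One way to picture schema observability is as a diff between two snapshots of a table's columns and types. The sketch below assumes each snapshot is a plain `{column: type}` dictionary; the column names are invented, and a real implementation would read the snapshots from the warehouse's information schema.

```python
def diff_schema(previous, current):
    """Compare two {column: type} snapshots and report structural changes."""
    added = sorted(current.keys() - previous.keys())
    removed = sorted(previous.keys() - current.keys())
    retyped = sorted(
        col for col in previous.keys() & current.keys()
        if previous[col] != current[col]
    )
    return {"added": added, "removed": removed, "retyped": retyped}

# Hypothetical snapshots taken before and after an upstream change.
previous = {"id": "int", "amount": "decimal", "cust_name": "text"}
current = {"id": "int", "amount": "text", "customer_name": "text"}

changes = diff_schema(previous, current)
print(changes)
```

Note that a renamed column shows up as one removal plus one addition, which is usually enough of a signal to alert downstream owners before their queries fail.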

Lineage

Lineage answers the two questions that matter most when something breaks: where did it break, and who is affected? Data pipeline observability depends heavily on lineage because, without it, incident response becomes a manual archaeology exercise across dozens of tables and transformations.

With lineage in place, you can trace a broken metric back to its root cause in minutes rather than hours. You can also proactively notify downstream consumers when an upstream change is coming so they are not blindsided.
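Both lineage questions reduce to graph traversal. In the sketch below, lineage is assumed to be a dictionary mapping each asset to the assets built directly from it; the asset names are made up. Walking the graph forward answers "who is affected", and walking the inverted graph answers "where could it have broken".

```python
from collections import deque

def reachable(graph, start):
    """Breadth-first list of every node reachable from start (excluding start)."""
    seen, order, queue = {start}, [], deque([start])
    while queue:
        node = queue.popleft()
        for nxt in graph.get(node, []):
            if nxt not in seen:
                seen.add(nxt)
                order.append(nxt)
                queue.append(nxt)
    return order

def invert(graph):
    """Flip parent -> children edges into child -> parents edges."""
    parents = {}
    for parent, children in graph.items():
        for child in children:
            parents.setdefault(child, []).append(parent)
    return parents

# Hypothetical lineage: each asset maps to its direct downstream consumers.
lineage = {
    "crm.leads_raw": ["staging.leads"],
    "staging.leads": ["marts.lead_scores", "marts.pipeline_summary"],
    "marts.lead_scores": ["dashboard.rep_performance"],
    "marts.pipeline_summary": ["dashboard.exec_weekly", "dashboard.rep_performance"],
}

affected = reachable(lineage, "staging.leads")                   # who is affected?
suspects = reachable(invert(lineage), "dashboard.exec_weekly")   # where did it break?
print(affected)
print(suspects)
```

The same traversal can drive proactive notifications: before changing an upstream asset, list everything reachable from it and warn those owners.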

Modern platforms now extend these five pillars to cover AI inputs and outputs as part of next-generation data observability architecture. As AI models become embedded in business-critical workflows, monitoring the health and consistency of the data feeding those models becomes just as important as monitoring the models themselves.

Data Observability Use Cases: Where Does It Actually Help?

Data observability for data engineering teams is not a nice-to-have when pipelines touch revenue, compliance or customer-facing products. The following use cases illustrate where data observability solutions deliver the most impact.

Analytical Use Cases

The most immediately visible impact of poor data observability is a broken BI dashboard.

An executive opens their weekly performance report, and the numbers are off:

- Maybe a pipeline failed, and nobody caught it

- Maybe a schema change caused a calculation to silently return nulls

Either way, decisions get made on bad data — or trust in the reporting layer collapses entirely.

Data observability prevents this by continuously monitoring the pipelines that feed analytical tools. When something deviates, the right person gets an alert before the wrong person gets the wrong report. For teams already investing in predictive sales analysis, the accuracy of those predictions is only as good as the data flowing into the models.

Operational Use Cases

Real-time pipelines feeding support systems, ecommerce recommendation engines or fraud detection models have no tolerance for data downtime. A fraud detection system fed stale transaction data does not just miss fraud. It flags the wrong transactions and damages customer trust.

Operational data observability focuses on latency, volume and freshness in streaming pipelines where the window between data arriving and decisions being made can be measured in seconds rather than hours.

Customer-Facing Use Cases

For businesses where data is the product — reporting suites, embedded analytics, SLA-backed data feeds — data observability is a contractual requirement as much as a technical one. If you have promised clients that their data will be accurate and available, you need the monitoring infrastructure to back that promise up.

This is increasingly relevant for sales platforms and CRMs. When a team relies on a platform like our sales CRM for AI marketing analytics and pipeline reporting, they trust that the data powering those views is accurate. Data observability is what makes that trust warranted.

ML and AI Use Cases

Training a machine learning model on corrupted or drifted data does not produce an error. It produces a model that looks healthy but makes systematically wrong predictions. Data observability for ML pipelines monitors the statistical properties of training data over time and flags when input drift could be affecting model quality.

As AI adoption accelerates across sales, marketing and operations, this use case is moving from specialist concern to mainstream priority very quickly.
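Input drift on a numeric feature is often summarized with the Population Stability Index (PSI), which compares how a training sample and a recent sample fall into the same bins. The following is a simplified sketch, not a production implementation: it uses equal-width bins derived from the training sample, a small floor to avoid taking the log of zero, and synthetic data.

```python
import math

def psi(expected, actual, bins=10, eps=1e-6):
    """Population Stability Index between a baseline sample and a new sample."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # fall back to 1.0 if all baseline values are equal

    def shares(sample):
        counts = [0] * bins
        for x in sample:
            idx = int((x - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1  # clamp out-of-range values
        # Floor each share at eps so empty bins do not produce log(0).
        return [max(c / len(sample), eps) for c in counts]

    e, a = shares(expected), shares(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))

# Synthetic feature values: the serving data is shifted upward by 30.
training = list(range(100))
serving = [x + 30 for x in training]

print(psi(training, training))
print(psi(training, serving))
```

A commonly cited rule of thumb treats a PSI below 0.1 as stable and above 0.25 as a significant shift, but those cutoffs are conventions and should be validated against your own models.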

The table below maps the primary use case and key benefit by team so you can quickly see where data observability solutions apply to your organization.

| Team | Primary use case | Key benefit | Additional Metrics/Examples |
| --- | --- | --- | --- |
| Data Engineering | Pipeline health monitoring and incident response | Reduced mean time to detection and resolution | Pipeline success rate, data arrival time variance, volume anomalies |
| Analytics and BI | Ensuring dashboard and report accuracy | Fewer bad-data incidents reaching decision-makers | Report refresh failures, stale data alerts, metric consistency checks |
| ML Engineering | Monitoring training data quality and input drift | Consistent model performance in production | Feature distribution drift, missing values rate, training-serving skew |
| Data Governance | Lineage tracking and compliance documentation | Audit-ready data provenance at scale | Data quality score per asset, sensitive data classification rate, access log audit trails |
| Business Operations | Reliable operational reporting and forecasting | Decisions made on current accurate data | SLA compliance rate for critical reports, data freshness score for real-time applications |
| Product Management | Understanding feature adoption and usage | High confidence in product analytics and A/B test results | Event data completeness, unique user tracking accuracy, funnel drop-off analysis |
| Sales & Marketing | Customer segmentation and campaign personalization | Improved ROI on outreach and reduced churn | CRM data completeness, lead score accuracy, campaign attribution correctness |

What stands out in this table is that data observability is not just a data engineering concern. Every team that relies on data to do its job has a stake in whether that data is trustworthy. The engineering team maintains the infrastructure, but the business teams are the ones who feel the consequences when it fails.

Data Observability Best Practices: How to Do It Right

Knowing what data observability is and actually implementing it well are two different conversations. The following best practices reflect how high-performing data teams approach the problem without burning out their engineers or boiling the ocean.

Here is the sequence that works:

- Start with your most critical data products: Identify the data products that are essential for key business operations or decision-making. Prioritizing these ensures that the most impactful data flows are protected first, providing the greatest return on your observability investment.

- Establish baseline metrics first: Before you can detect anomalies, you need to know what "normal" looks like. Define and track core metrics like data volume, schema consistency, field completeness, and freshness (latency) for your critical data products to create a stable baseline.

- Build incident response muscle early: Observability is useless without a plan for action. Define clear roles, communication channels, and processes for when a data incident is detected. Regularly practicing or simulating incident response will speed up resolution times when real issues occur.

- Communicate SLAs to data consumers: Define and publicly share Service Level Agreements (SLAs) for data quality and availability with the teams and applications that rely on your data. This manages expectations and provides a clear standard against which the data team can be held accountable.

- Expand coverage iteratively: Don't try to observe everything at once. Once you have a stable system for your most critical data, gradually expand your observability coverage to less critical data products, adding more detailed metrics and monitoring as you go.

Here is what each step actually involves.

Start With Your Most Critical Data Products

Do not try to monitor everything at once. That is the fastest way to create alert fatigue and kill adoption. Start by identifying the three to five pipelines where data downtime would directly impact revenue, compliance or customer experience. These are your tier-one data products, and they earn the first layer of observability investment.

For a sales team, this might be the pipeline feeding:

- The live pipeline dashboard

- The lead scoring model

For a finance team, it might be the revenue recognition tables. The point is specificity. According to Dataversity's breakdown of the 1x10x100 rule, a data quality issue caught at the point of entry costs one unit of effort to fix.

The same issue caught at the decision-making stage costs 100 units. Starting with critical data products means you are applying observability where the cost of failure is highest.

Establish Baseline Metrics First

Before you can detect an anomaly, you need to know what normal looks like. Set data observability metrics for freshness, volume and schema on your priority pipelines before adding field-level distribution monitors. This gives your observability tooling the context it needs to distinguish a real problem from expected variance.

Baselining also forces a useful conversation: what does healthy actually look like for this pipeline? Getting data producers and consumers to agree on that definition early prevents a lot of downstream confusion about whether an alert is a real incident or a known edge case.

Ringy's sales reports and analytics features operate on this same principle: meaningful reporting requires an agreed baseline before you can measure deviation.
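The baselining idea can be sketched with a mean and standard deviation computed from recent history, then a z-score test on each new observation. The row counts and the threshold of three standard deviations are assumptions for illustration; seasonal pipelines usually need a baseline per weekday or per hour rather than a single global one.

```python
from statistics import mean, stdev

def build_baseline(history):
    """Summarize what 'normal' looks like from at least two past observations."""
    return {"mean": mean(history), "stdev": stdev(history)}

def is_anomalous(value, baseline, z_threshold=3.0):
    """Flag values more than z_threshold standard deviations from the baseline mean."""
    if baseline["stdev"] == 0:
        return value != baseline["mean"]
    z = (value - baseline["mean"]) / baseline["stdev"]
    return abs(z) > z_threshold

# Hypothetical daily row counts for one pipeline over the past week.
history = [49_800, 50_200, 50_100, 49_900, 50_000, 50_300, 49_700]
baseline = build_baseline(history)

print(is_anomalous(50_400, baseline))  # within expected variance
print(is_anomalous(3_000, baseline))   # far outside the baseline
```

Agreeing on the history window and the threshold is the practical form of the conversation described above: producers and consumers decide together how much variance counts as normal.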

Build Incident Response Muscle Early

Observability tools that alert into a void are just noise generators. From day one, route alerts to the right channels (Slack, PagerDuty or whatever your team actually monitors) and assign clear ownership. Document incident timelines even for minor issues. This builds the organizational habit of treating data incidents with the same seriousness as software incidents.

The teams that get the most value from data observability platforms are the ones that have invested as much in their response workflows as in their monitoring coverage. Detection without response is just a more expensive way of finding out your data is broken.
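Routing and ownership can start as a small lookup table long before any tooling is involved. Everything in this sketch is hypothetical: the pipeline names, team names and channel labels are placeholders, and the function only decides where an alert should go rather than calling any real Slack or PagerDuty API.

```python
# Hypothetical routing table: pipeline -> owning team and channel per severity.
ROUTES = {
    "lead_scoring": {"owner": "ml-team", "high": "pagerduty", "low": "slack:#ml-alerts"},
    "pipeline_dashboard": {"owner": "analytics-team", "high": "pagerduty", "low": "slack:#bi-alerts"},
}

# Fallback so that no alert is ever left without an owner.
DEFAULT_ROUTE = {"owner": "data-platform", "high": "slack:#data-incidents", "low": "slack:#data-incidents"}

def route_alert(pipeline, severity):
    """Return the owner and destination channel for an alert."""
    if severity not in ("high", "low"):
        raise ValueError(f"unknown severity: {severity!r}")
    route = ROUTES.get(pipeline, DEFAULT_ROUTE)
    return {"pipeline": pipeline, "owner": route["owner"], "channel": route[severity]}

print(route_alert("lead_scoring", "high"))
print(route_alert("unmapped_pipeline", "low"))
```

The default route is the important design choice here: an alert with no explicit owner still lands somewhere a person is watching.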

Communicate SLAs to Data Consumers

One of the most underrated benefits of data observability is what it does for data trust across the organization. When analysts and business stakeholders can see a data health dashboard showing freshness status and incident history for the pipelines they rely on, the number of "why is my dashboard wrong?" messages drops dramatically.

Surface data health information to the teams consuming the data. Set clear expectations about freshness SLAs and communicate proactively when those SLAs are at risk. This transforms observability from a behind-the-scenes infrastructure concern into a visible quality signal that builds organizational confidence in the data stack.
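A freshness SLA becomes easier to communicate once it is reduced to a single compliance number. The sketch below assumes an SLA of "data lands by 6 am" and a list of made-up daily landing times; the function name is illustrative.

```python
from datetime import time

def sla_compliance(landing_times, deadline):
    """Fraction of runs whose data landed at or before the deadline."""
    if not landing_times:
        raise ValueError("no runs to evaluate")
    met = sum(t <= deadline for t in landing_times)
    return met / len(landing_times)

# Hypothetical landing times for the last five daily runs.
landings = [time(5, 40), time(5, 55), time(6, 10), time(5, 30), time(11, 45)]

rate = sla_compliance(landings, deadline=time(6, 0))
print(f"Freshness SLA met on {rate:.0%} of runs")
```

Publishing a figure like this on a data health dashboard gives consumers a shared reference point when they ask whether a report can be trusted.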

Expand Coverage Iteratively

Once your tier-one pipelines are covered, extend observability to ML pipelines, customer-facing data products and governance use cases. Add field-level distribution monitoring as your baselining matures. Integrate lineage mapping to support root cause analysis at scale.

The key word is iteratively.

Trying to instrument your entire data stack in a single sprint usually results in misconfigured monitors, too many alerts and rapid disillusionment. Expanding methodically means each new layer of coverage is calibrated against established baselines and has clear ownership before you move on to the next.

Take Control of Your Data Observability

Data observability is the operational infrastructure that turns raw data monitoring from a reactive scramble into a proactive discipline. This article has covered what it is, how it differs from data quality, the five pillars that underpin any serious framework and the use cases and best practices that separate teams who have genuinely solved the problem from those still fighting fires.

The businesses getting the most out of their data in 2026 are not the ones with the most data. They are the ones who know their data is trustworthy and can prove it. Auditing your current data stack for observability gaps is one of the highest-leverage things a data leader can do this quarter.

If you want visibility into your sales and pipeline data without building a dedicated observability stack, Ringy gives you key analytics at a glance without having to switch tools.

Request a demo and see what clean, reliable data visibility actually looks like in practice.
